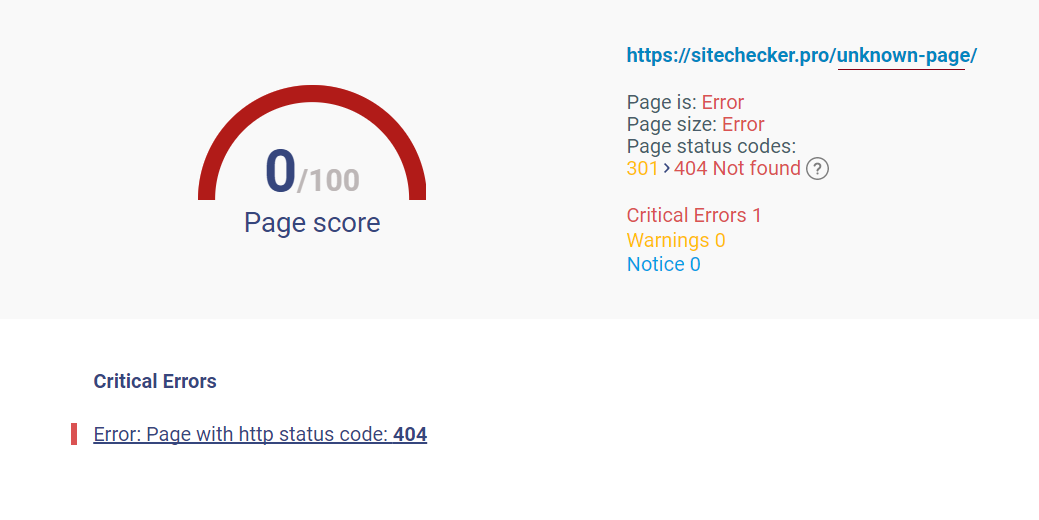

Site errors are issues that affect your entire website and should be prioritized. An example of what you want to see is a report with no site errors.

DNS errors are a major problem: they mean that Googlebot couldn’t connect with your domain because of a DNS lookup or DNS timeout issue.

Server errors usually mean that your site is taking too long to respond, so the request times out. In this case Googlebot can connect to your site but can’t load the page.

Robots.txt Fetch Errors

A robots error means that Googlebot cannot retrieve your robots.txt file from /robots.txt. However, you only need a robots.txt file if you don’t want Google to crawl certain pages. If you don’t have a robots.txt file, the server will return a 404 and Googlebot will simply continue to crawl your site.
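The three kinds of site error described here (DNS, server, and robots.txt fetch) can be told apart with a small diagnostic script. The following is a minimal sketch, not a reproduction of how Googlebot actually works: the function names `fetch_status` and `classify_robots_fetch`, the category labels, and the choice to treat every non-2xx, non-404 status as a fetch error are all assumptions made for illustration.

```python
import socket
import urllib.error
import urllib.request


def fetch_status(url, timeout=10.0):
    """Return the HTTP status code for url (performs a real network request)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses raise HTTPError but still carry a status code.
        return err.code


def classify_robots_fetch(url, fetch=fetch_status):
    """Map the outcome of fetching robots.txt to a rough site-error category.

    The labels are hypothetical and simplified for illustration.
    """
    try:
        status = fetch(url)
    except urllib.error.URLError as err:
        if isinstance(err.reason, socket.gaierror):
            return "dns_error"      # the domain name could not be resolved
        if isinstance(err.reason, (socket.timeout, TimeoutError)):
            return "server_error"   # connected too slowly: request timed out
        return "connection_error"
    except (socket.timeout, TimeoutError):
        return "server_error"       # timed out while reading the response

    if 200 <= status < 300:
        return "ok"
    if status == 404:
        return "no_robots_file"     # no robots.txt: crawling simply continues
    return "robots_fetch_error"     # assumption: any other status is a failure
```

Calling `classify_robots_fetch("https://example.com/robots.txt")` performs a live request against a placeholder URL. The `fetch` parameter exists so the classification logic can be exercised with a stub instead of the network.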
